Games often have to render highly dynamic geometry such as sparks, bullet trails, muzzle flashes and lightning arcs. Sometimes it’s possible to offload the work of simulating these things to the GPU, but there are effects that can’t be done by the GPU alone.

These cases usually occur when effects require heavy interaction with level geometry or when they require lots of conditionals to mutate a persistent effect state. And sometimes, the effort of simulating an effect on the GPU is just not worth the results. If you have maybe a hundred instances of a bullet trail at once, letting the GPU orient the constrained billboards for the trails instead of generating the vertices on the CPU might just not yield any tangible benefits.

However, there are still a lot of traps you can run into. A typical mistake of the unknowing developer is to render the primitives one by one, using one Draw[Indexed]UserPrimitives() call per spark/trail/arc.

This is not a good idea because modern GPUs are optimized for rendering large numbers of polygons at once. When you call Draw[Indexed]UserPrimitives(), XNA will call into Direct3D, which will cause a call into the driver (which means a call from code running in user mode to code running in kernel mode, which is especially slow). Only then are the vertices added to the GPU’s processing queue.

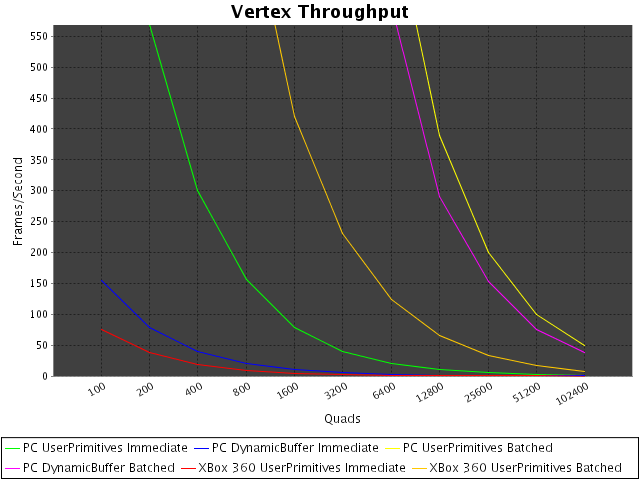

When you send single primitives to the GPU, this per-call overhead is paid once for every primitive and can become a real bottleneck. To demonstrate the effects of making lots of small drawing calls, I wrote a small benchmark and measured the results on my GeForce 8800 GTS 512 and on whatever the Xbox 360 uses as its GPU.

To render such dynamic geometry efficiently, you need to collect the primitives into larger batches, for example by appending them to an array until it is full and then doing a single DrawPrimitive() call that sends the entire array of primitives to the GPU.
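
As a rough illustration of the "append until full, then draw once" idea, here is a minimal sketch in plain C#. It deliberately avoids XNA types: `IVertexSink`, `CountingSink` and `TriangleBatcher` are hypothetical names made up for this example, and the sink merely stands in for whatever actually issues the draw call.

```csharp
using System;

// Hypothetical stand-in for the graphics device; not an XNA type.
public interface IVertexSink<TVertex>
{
    void Draw(TVertex[] vertices, int vertexCount);
}

// Counts how many draw calls and vertices were submitted.
public sealed class CountingSink<TVertex> : IVertexSink<TVertex>
{
    public int DrawCalls;
    public int VerticesDrawn;

    public void Draw(TVertex[] vertices, int vertexCount)
    {
        DrawCalls++;
        VerticesDrawn += vertexCount;
    }
}

// Collects triangle-list vertices and submits them in large chunks.
public sealed class TriangleBatcher<TVertex> where TVertex : struct
{
    private readonly TVertex[] buffer;
    private readonly IVertexSink<TVertex> sink;
    private int used;

    public TriangleBatcher(IVertexSink<TVertex> sink, int capacityInTriangles)
    {
        if (sink == null) throw new ArgumentNullException(nameof(sink));
        if (capacityInTriangles < 1)
            throw new ArgumentOutOfRangeException(nameof(capacityInTriangles));
        this.sink = sink;
        this.buffer = new TVertex[capacityInTriangles * 3];
    }

    public void AddTriangle(TVertex a, TVertex b, TVertex c)
    {
        // Only whole triangles go into the buffer, so flush before it overflows.
        if (used + 3 > buffer.Length) Flush();
        buffer[used++] = a;
        buffer[used++] = b;
        buffer[used++] = c;
    }

    // Sends everything collected so far in a single call.
    public void Flush()
    {
        if (used == 0) return;
        sink.Draw(buffer, used);
        used = 0;
    }
}
```

With a capacity of 100 triangles, adding 250 triangles and flushing once at the end results in three calls to the sink instead of 250.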

This introduces several new problems, however. If your effects are rendered using different textures or even different effects (shaders), you cannot use a single DrawPrimitive() call. You will have to create multiple batches, one for each texture and/or effect you’re rendering with. And if you want to preserve the drawing order (in case you’re drawing without a depth buffer), you cannot just use a dictionary to have one batch for each texture/effect; you need to keep them ordered and break the batch each time a different texture/effect is used.
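
The order-preserving variant could look something like the sketch below. Again this is plain C# with made-up names (`OrderedBatcher`, `Run`), and `TState` is a placeholder for whatever identifies a texture/effect combination. The point is only that a run is extended while the state stays the same and a new run starts as soon as it changes.

```csharp
using System;
using System.Collections.Generic;

// Keeps primitives in submission order and starts a new run whenever the
// render state (texture, effect, ...) differs from the previous primitive.
public sealed class OrderedBatcher<TVertex, TState> where TVertex : struct
{
    // One run becomes one draw call.
    public struct Run
    {
        public TState State;
        public int Start;   // index of the run's first vertex
        public int Count;   // number of vertices in the run
    }

    private readonly List<TVertex> vertices = new List<TVertex>();
    private readonly List<Run> runs = new List<Run>();

    public IReadOnlyList<Run> Runs => runs;

    public void Add(TState state, params TVertex[] primitiveVertices)
    {
        int last = runs.Count - 1;
        if (last >= 0 && EqualityComparer<TState>.Default.Equals(runs[last].State, state))
        {
            // Same state as the previous primitive: extend the current run.
            Run run = runs[last];
            run.Count += primitiveVertices.Length;
            runs[last] = run;
        }
        else
        {
            // State changed: break the batch here, even if this state was used earlier.
            runs.Add(new Run { State = state, Start = vertices.Count, Count = primitiveVertices.Length });
        }
        vertices.AddRange(primitiveVertices);
    }

    // Hands each run to the caller in the original drawing order, then resets.
    public void Flush(Action<TState, int, int> drawRun)
    {
        foreach (Run run in runs) drawRun(run.State, run.Start, run.Count);
        vertices.Clear();
        runs.Clear();
    }
}
```

Submitting spark, spark, trail, spark produces three runs here, where a dictionary keyed by state would produce two batches and lose the order.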

This essentially is what XNA’s SpriteBatch class does for you.

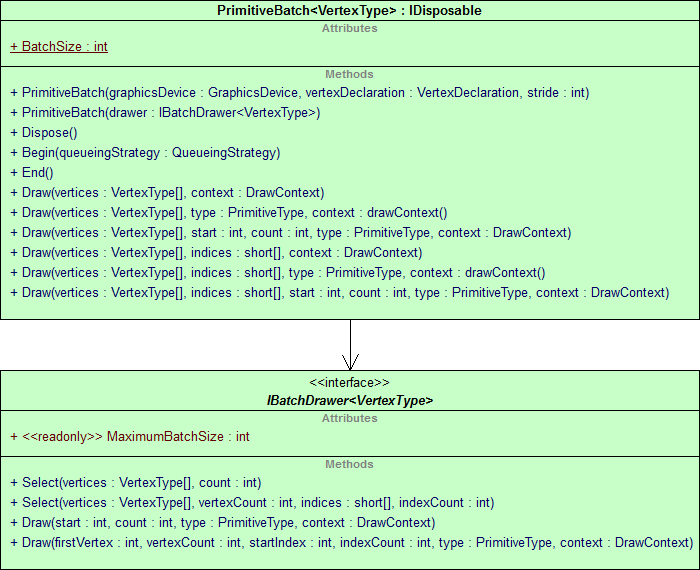

In my latest game, however, I needed to render 3D primitives and the SpriteBatch can only do 2D stuff. So I had to create my own SpriteBatch, which had to work with any custom vertex structure I might come up with. I called this new class PrimitiveBatch to stay within XNA’s naming scheme but reflect that it was not limited to sprites.

It took me several evenings of pedantic, brain-melting coding and refactoring to support all the different primitive types without any off-by-one errors, which might lead to a primitive not drawing at all or, even worse, to all further primitives being off by one vertex, causing triangles to connect the wrong vertices. In that regard, unit testing really helped a lot.
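
To give an idea of where such off-by-one errors come from: each primitive type maps a vertex count to a primitive count differently. The helper below is only a sketch of that arithmetic, not code from the actual PrimitiveBatch. The enum is declared locally so the example compiles without XNA; its members are named after four of the values in XNA's PrimitiveType.

```csharp
using System;

// Local stand-in named after values of XNA's PrimitiveType enumeration.
public enum BatchPrimitiveType
{
    TriangleList,
    TriangleStrip,
    LineList,
    LineStrip
}

public static class PrimitiveMath
{
    // Number of complete primitives that vertexCount vertices describe.
    public static int PrimitiveCount(BatchPrimitiveType type, int vertexCount)
    {
        if (vertexCount < 0)
            throw new ArgumentOutOfRangeException(nameof(vertexCount));

        switch (type)
        {
            case BatchPrimitiveType.TriangleList:
                return vertexCount / 3;               // 3 vertices per triangle
            case BatchPrimitiveType.TriangleStrip:
                return Math.Max(0, vertexCount - 2);  // first triangle needs 3, each further one 1
            case BatchPrimitiveType.LineList:
                return vertexCount / 2;               // 2 vertices per line
            case BatchPrimitiveType.LineStrip:
                return Math.Max(0, vertexCount - 1);  // first line needs 2, each further one 1
            default:
                throw new ArgumentOutOfRangeException(nameof(type));
        }
    }
}
```

Lists silently drop leftover vertices, while strips share vertices between neighbours, which is why appending two strips to the same buffer is not as simple as concatenating them.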

First I needed to design the PrimitiveBatch so that my unit tests could capture what it would send to the GPU.

With that in place, I wrote tests for the situations the PrimitiveBatch might encounter. That uncovered quite a few problems hiding in the code and waiting to be tripped in my game at unexpected times.
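
One way to make a batcher testable like this is to put the actual drawing behind a small interface and substitute a recording implementation in the tests. The sketch below shows that general pattern under my own assumptions; `IBatchDrawer` and `RecordingDrawer` are hypothetical names and not necessarily how the real PrimitiveBatch is structured.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical seam between the batcher and the graphics device.
public interface IBatchDrawer<TVertex>
{
    void Draw(TVertex[] vertices, int startVertex, int vertexCount);
}

// Test double that remembers exactly what each draw call contained.
public sealed class RecordingDrawer<TVertex> : IBatchDrawer<TVertex>
{
    public readonly List<TVertex[]> Calls = new List<TVertex[]>();

    public void Draw(TVertex[] vertices, int startVertex, int vertexCount)
    {
        // Copy the range, because a batcher typically reuses its buffer
        // for the next batch right after the call returns.
        var copy = new TVertex[vertexCount];
        Array.Copy(vertices, startVertex, copy, 0, vertexCount);
        Calls.Add(copy);
    }
}
```

A test can then feed primitives into the batcher and compare `Calls` against the vertices it expects to arrive, call by call, without needing a graphics device.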

Luckily, after fixing them all and integrating the PrimitiveBatch into my game, not a single issue has surfaced and everything draws just as it did before with the individual DrawIndexedUserPrimitives() calls, just way faster :D